The Temperature Of The UK Over The Last 100 Years (part 5)

A butcher's at daily maxima and minima over the last 100 years. Are things getting worse, and what does ‘worse’ mean?

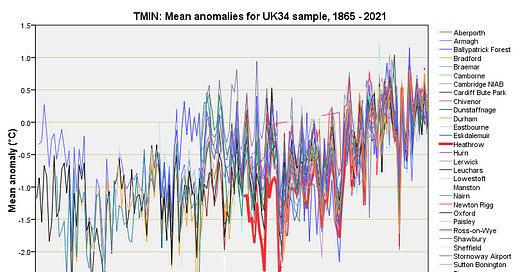

Right then, it’s 7:40am and I’ve cleared away the rotting apples, gathered the decent ones and put the recycling bins out moments before the truck hauls into our sleepy close. I’ve a hot lemon tea to my right that is smacking my taste buds good and proper, and I’ve a spreadsheet template open, ready to crunch tmin (mean daily minimum temperature) for the 34 stations in my sample, data for which may be obtained here.

The tmin normals are relatively easy to calculate and I recommend subscribers go right back to this newsletter, this newsletter, this newsletter, and this newsletter to better understand what temperature anomalies mean, how they get calculated, how to correctly interpret them, and why I’m using the 1991-2020 climatological normal for my sample of stations and not the current WMO 1961-1990 normal.
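For those who prefer code to spreadsheets, here is a rough sketch of how a tmin normal and the resulting anomalies might be worked out for a single station. It is not the template I actually use: the function names, the `(year, month, tmin)` record layout and the sample values are all made up for illustration, and it assumes the normal is the plain mean of each calendar month over 1991-2020.

```python
from collections import defaultdict
from statistics import mean


def monthly_normals(records, start=1991, end=2020):
    """Mean tmin per calendar month over the baseline period (inclusive).

    `records` is a list of (year, month, tmin) tuples; this layout is an
    assumption for the sketch. Missing values are passed as None and skipped.
    """
    by_month = defaultdict(list)
    for year, month, tmin in records:
        if start <= year <= end and tmin is not None:
            by_month[month].append(tmin)
    return {month: mean(values) for month, values in by_month.items()}


def anomalies(records, normals):
    """Anomaly = observed tmin minus the normal for that calendar month."""
    return [
        (year, month, tmin - normals[month])
        for year, month, tmin in records
        if tmin is not None and month in normals
    ]


# Made-up January values for one hypothetical station, purely to show the shape.
sample = [(1990, 1, 0.0), (1991, 1, 1.0), (2020, 1, 3.0), (2021, 1, 4.0)]
normals = monthly_normals(sample)
print(normals)
print(anomalies(sample, normals))
```

Note that years outside 1991-2020 still get an anomaly; they simply don't contribute to the normal they are being compared against.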

Tmin & Sharks

Just in case folk are confused as to what tmin actually means, here’s the definition provided by the Met Office:

I’ll flesh this out by saying the lowest daily temperature…